QArmy vs. QAnon Debunkers: The role of confirmation bias

In this paper, I will examine how one article - which explains the American pro-Trump conspiracy theory QAnon - was taken up by debunkers of the theory (non-believers) and the QArmy (believers). The article in question was published by the online news site The Daily Beast, and it influenced discourses of the two oppositional communities online in different ways.

The objective is to understand how a topic (QAnon) becomes a matter of concern, particularly in the online environment. This goal matters because it helps us understand how different people perceive the (same) world in often contrasting or distinct ways, and what that means in an increasingly digitalized world in which online phenomena have offline consequences.

QAnon, an American conspiracy theory

So what is QAnon? QAnon is a conspiracy theory embraced by Trump supporters in the US and an online New Right activist community, as argued by Blommaert and Procházka (2019). Valverde (2018) explains that Q “traces back to an anonymous online persona claiming to be a government insider on a mission to expose the ‘deep state’ allegedly working against Trump.” It operates by posting vague and cryptic clues ("breadcrumbs") on fringe online forums like 4chan or 8chan.

Using the method of digital issue mapping, I aim to show how a topic becomes a matter of concern. To do this, I will begin by defining the actors involved, their statements, and the networks created around the article published by The Daily Beast. More precisely, I will focus on two Twitter accounts: that of Will Sommer (who is also the writer of said article) and that of QBeliever Jordan Sather. I chose these two actors because they can be identified as the dominant voices in the matter at hand: their tweets on the matter received the most attention and engagement. This conclusion was drawn using CrowdTangle, a platform that enables the user to identify influential and meaningful posts based on social media metrics.

Additionally, I will take into account the reaction of the general public as portrayed on the online networking platform Reddit, where a thread about the article I am interested in exists. Here, we can get some insight into how people try to make sense of the information at hand. As Blommaert and Procházka (2019) explain, “the Internet is the place where conspiracy theories emerge and grow, before being moved into mainstream media”; for this reason, this article focuses particularly on online sources.

Debunkers of QAnon and framing

Let us see in what way people report about QAnon. Who are they? And why do they share facts about QAnon? Moreover, what is the intended audience of the article in question?

The article "What Is QAnon? The Craziest Theory of the Trump Era, Explained" was written by Will Sommer and published on The Daily Beast, a news site that defines itself as “Independent. Irreverent. Intelligent.” delivering “award-winning original reporting and sharp opinion in the arena of politics, pop-culture and power. Always skeptical but never cynical”.

As the title gives away, the writer is sharing the article with the purpose of explaining the QAnon theory. By looking at the language used, we realize that Sommer is framing the theory in a particular way, namely as “crazy”. We have to understand the title choice as being connected to sentiment polarity in contemporary media, which aims at clickbait-worthy and controversial titles to catch the audience's attention. This approach is common in contemporary news work, as people value a headline that is either extremely positive or extremely negative more than one that is nuanced. Adding to this, the remark “and you thought pizza gate was nuts” gives away the value judgment of the reporter, who clearly does not believe in the conspiracy theory.

What I seek to do is try to understand how people who believe in QAnon make sense of the world, how certain narratives become accepted by them as "knowledge", and how online (social) media is involved in this process.

For starters, framing plays an important role in transmitting a message. According to Entman (1993, p. 52), “to frame is to select some aspects of a perceived reality and make them more salient in a communicating text, in such a way as to promote a particular problem definition, causal interpretation, moral evaluation, and/or treatment recommendation for the item described”. In other words, frames operate by picking out some bits of information (selection) and highlighting them (salience), while omitting other aspects of reality. For this reason, framing has the power to influence people’s judgments.

In the article, we find many examples of framing, such as the following: "since Q could be anyone with internet access and a working knowledge of conspiracy theories, there’s no reason to think that Q is a member of the Trump administration rather than, say, a troll or YouTube huckster. But incredibly, lots of people believe it". Here, we encounter a skepticism towards the theory that is present throughout the article.

This points to the importance of language use in the context of politics in a mediatized environment. As mentioned by Maly (2019), politics, language and media are highly interrelated. We can say that politics is an ongoing mediatized debate, where language plays a crucial role. Indeed, language creates frames through which we can look at the world and make sense of it. In politics, we encounter a discursive battle for meaning, where people constantly fight for the normalization of their opinion, wanting their worldview to be perceived as "truth" (Maly, 2016).

QArmy's conceptual framework

Generally speaking, conspiracy theories do not look at facts; instead, they look for patterns. Therefore, when it comes to conspiracy theories, debunking a fact does not help with proving them wrong. On the contrary, trying to disprove their statements will only legitimize their concerns. This happens because debunking a fact means paying attention to a topic. Thus, people feel authorized to think there must be some truth to their concern, or those in power wouldn't be trying so hard to disprove their statements.

Moreover, as Dentith (2014) writes, “conspiracy theories online are now phrased in vague and less precise ways in order to avoid being easily falsified”. Adding to this, there is a general principle, as presented by the political scientist Brendan Nyhan in a video for Vox, that "as human beings, we are all skeptical of information that seems to contradict some existing value or belief or attitude that we have. And so we can be unduly skeptical of that information that is unwelcome” (Vox, 2017, 2:17).

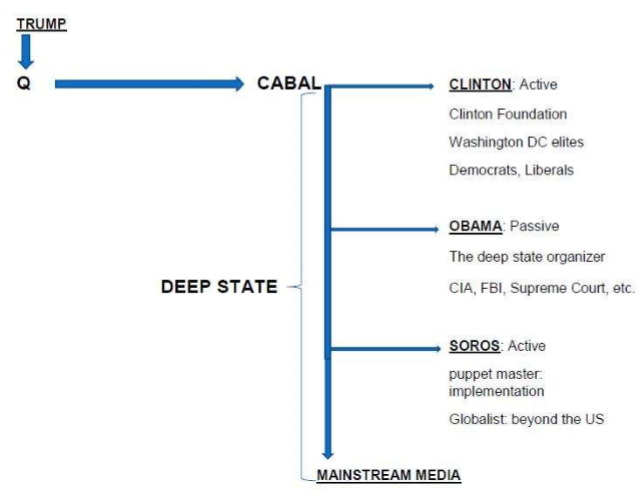

For conspiracy theorists, truth is typically hidden by powerful opponents and demands to be revealed. Varis (2018) mentions how there are “websites and forums, YouTube videos and Reddit threads, beckoning you to wake up and see the truth behind the appearances, behind all the disinformation and lies, fakes upon fakes”. In this sense, QAnon also has a conceptual frame filled with premises and assumptions through which it operates and performs its actions (see Figure below).

According to this framework, Cabal actors (Hillary Clinton, Barack Obama, George Soros, and the mainstream media) are the real powers that control the US and the world. The overall assumption of the conspiracy theory is that mainstream news is fake, and that fact-checking must reveal the involvement of the Cabal actors, an alliance also qualified by Trump as “the swamp”. Q explicitly inscribes its actions in Trump’s plan to “drain the swamp” (Blommaert & Procházka, 2019).

The uptake of the article on social media: the case of Twitter and Reddit

This is a screenshot of Jordan Sather's tweet sharing the QAnon article. Sather is what we call the "anti-program" in the context of digital issue mapping, and the tweet shows how he tries to undermine Sommer's journalistic professionalism and credibility by calling the final product "shit" and "easy". One might also notice the prominence of the figures (number of likes and retweets), which mark Jordan Sather as a dominant voice within the QAnon community (or QArmy, as they call themselves).

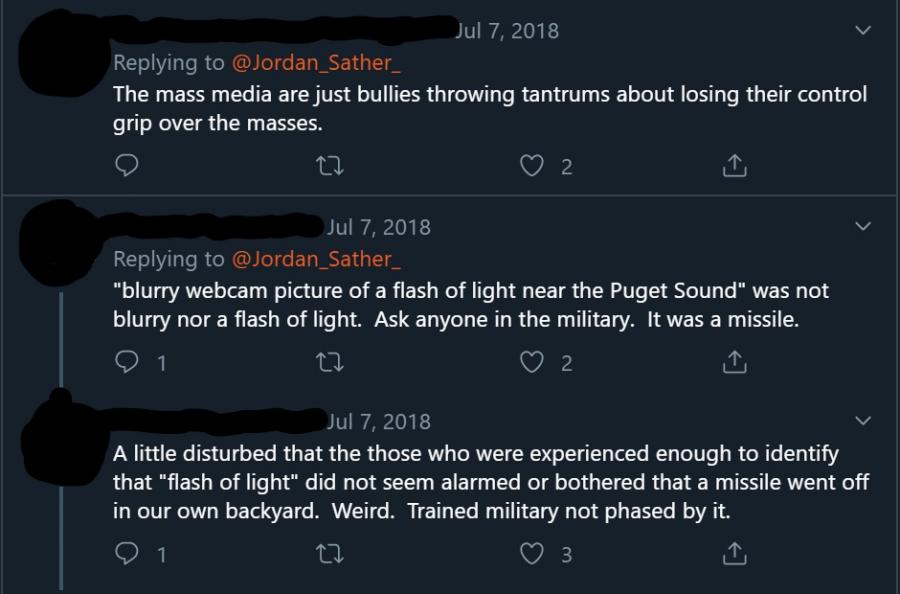

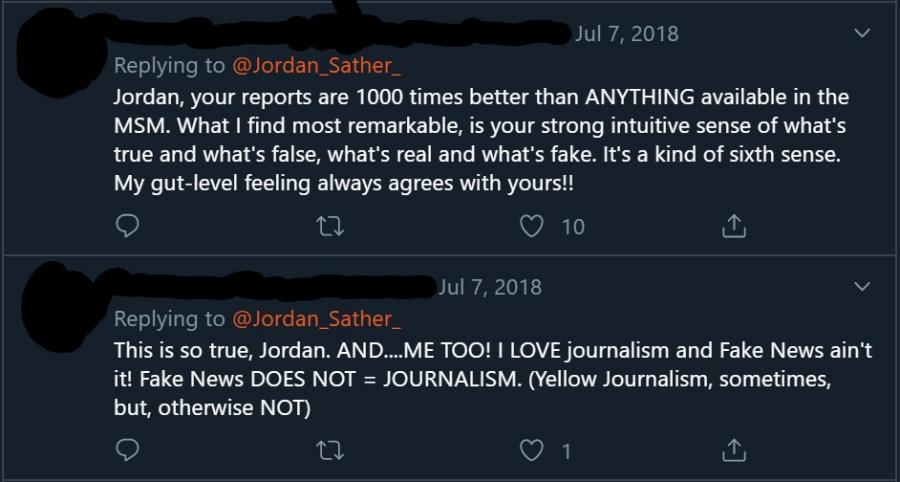

Comments from different users often use derogatory terms aimed at undermining the credibility of mainstream media (as can be expected from the skeptical view of mainstream news media adopted among the group). Others also sarcastically praise Sather or even reply to parts of the original article, as shown in the following screenshots from Twitter.

Others yet identify with Sather as fellow college drop-outs.

But we also have rare cases of disagreement coming from people outside the circle, writing "Jordan is not a journalist he's a creative fiction writer, and a pretty damn good one" or even "You are a great example of an autodidactic iconoclast".

On the other side of the spectrum (what we call the "program" in the context of digital issue mapping) we have the author of the article, Will Sommer himself, who shared it on Twitter with the caption "Here's the QAnon explainer you've been waiting for. Behold: the conspiracy theory eating the right-wing internet". Looking at the following screenshots, we can say the writer is committed to the cause of updating his followers on "new beliefs" about QAnon.

Adding to this, there is a contribution from an academic.

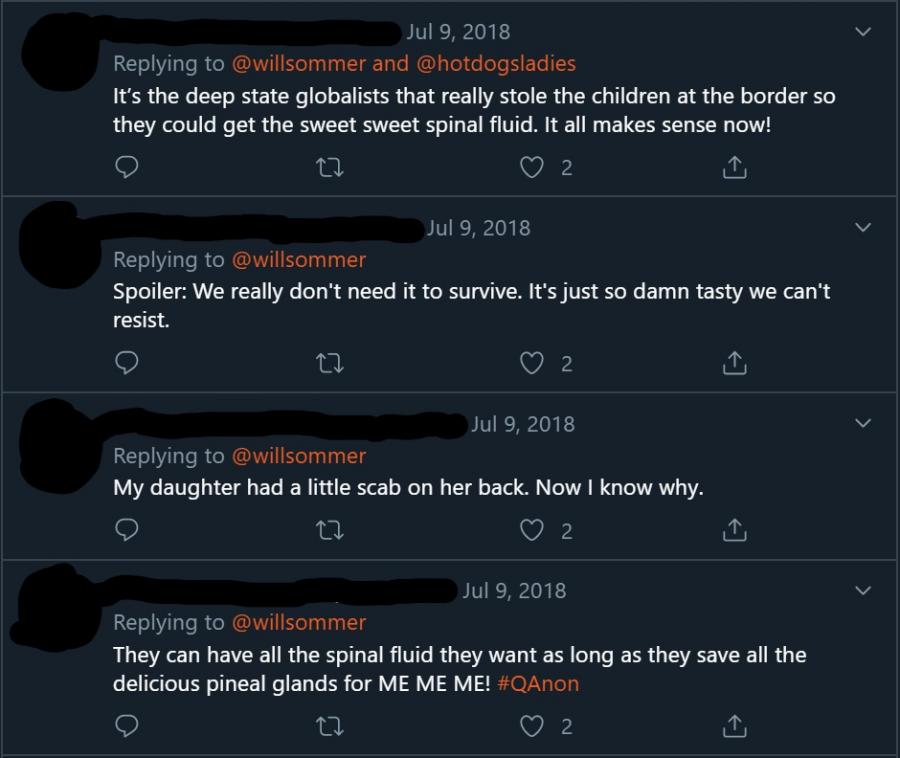

Disbelief is expressed by many with comments like "I just don’t get how a person can read this crazy bullshit and think “yep, that is exactly what’s happening”" and "What?! The crazy level is escalating quickly". But in this case, the most prominent comments on the update are sarcastic and derogatory, as people choose to simply make fun of QAnon's beliefs, as the screenshot below shows. Moreover, a specific comment links the update about the "spinal fluid" directly to an episode of South Park, an American adult animated sitcom.

Looking at another popular website, we see that opinions about the article on Reddit hypothesize that a troll is behind the Q account (instead of an actual US government insider, as believed by the QArmy). They call out "nonsense" and attack QBelievers as "dumbshits" or "idiots", saying: "(they) think this shit is real, despite almost all of it being verifiably and demonstrably false. But these are the same dumbshits that still insist there was a child sex ring being run out of the basement of a building with no basement, and that believe Donnie Twoscoops is doing a good job, so they aren’t really bastions of reason, or anything resembling intelligence" (referring to the pizzagate conspiracy theory).

Another user shares an opinion on why people would believe in the conspiracy: "The reason people believe this nonsense isn't because they are fairly weighing the evidence and they're just dumb and can't do it properly. It's because believing these things makes them feel special [...] they get "special" information that makes them more privileged than others".

On Reddit, compared to Twitter, and in spite of the use of some malicious words, we can at least notice that users formulate a (to a certain extent) reasoned opinion alongside their disbelief.

Confirmation bias on social media

This brings us back to the question of how we can compare reactions of American citizens to the same article - an article aimed at explaining what QAnon is - which they have made an issue out of. To tackle this, I analyzed the social media profiles of Jordan Sather and Will Sommer on Twitter, two people who decided to share the article having opposing views on the subject.

The conclusion is that, ultimately, the article does not contribute to finding middle ground between the two oppositional discourses. As a matter of fact, the article only confirms people’s preconceived opinions and beliefs, as audiences react by attacking the other party in one way or another, using spiteful language and sarcasm. On the one hand, we have debunkers and people who hear about QAnon for the first time. For them, reading the article only confirms the (expected) craziness of the theory, as framed by the title of the article itself. On the other hand, we have the QArmy and people skeptical of the media. We can see from the Twitter comments that they make fun of journalists and that they use irony to ridicule the article. This is what we call confirmation bias, "the tendency to search for, interpret, favor, and recall information in a way that affirms one's prior beliefs or hypotheses" (Wikipedia, n.d.). In this case, people read the article only to look for proof that confirms what they already thought was true.

References

Blommaert, J. & Procházka, O. (2019). Ergoic framing in New Right online groups: Q, the MAGA kid, and the Deep State theory. Tilburg Papers in Culture Studies nr. 224.

Entman, R. M. (1993). Framing: Toward clarification of a fractured paradigm. Journal of Communication, 43(4), 51-58.

Maly, I. (2019). New Right Metapolitics and the Algorithmic Activism of Schild & Vrienden. Social Media + Society. https://doi.org/10.1177/2056305119856700

Maly, I. (2016). 'Scientific nationalism'. N‐VA and the discursive battle for the Flemish nation. Nations and Nationalism. https://doi.org/10.1111/nana.12144

Sommer, W. (2018, June 7). What Is QAnon? The Craziest Theory of the Trump Era, Explained. The Daily Beast.

Valverde, M. (2018, August 3). QAnon and Donald Trump rallies: What's that about?. Politifact.

Varis, P. (2018, November 1). Conspiracy theorizing online. Diggit Magazine.

Vox (2017, August 30). Why fact-checking can’t stop Trump’s lies.

Wikipedia. (n.d.). Confirmation bias. Retrieved on 18 November 2019.